Hire the Top 2% of Remote Google Cloud Dataflow Developers in Brazil

Your trusted source for top remote Google Cloud Dataflow developers, perfect for startups and enterprises.

Freelance contractors | Full-time roles | Global teams

656 top Google Cloud Dataflow developers available to hire:

Vetted Google Cloud Dataflow developer in Brazil (UTC-3)

As a Senior Full-Stack Engineer with over 16 years of experience developing full-featured software solutions, I have robust knowledge of Angular, React, Next.js, Node.js (Express), Python (FastAPI), and the end-to-end SDLC, which I use to create innovative services and applications. I have extensive experience with Agile methodologies and the Scrum framework, and I am passionate about building the most efficient solutions. Technologies: Angular, React, Node, Python, GitHub, REST API, SQL, JavaScript, TypeScript, jQuery, Docker, C#, Java, and more.

Vetted Google Cloud Dataflow developer in Brazil (UTC+2)

I am a Senior Data Engineer with experience in Apache Beam, Spark, Python, Kotlin/Java, and more. I have led teams, migrated AWS accounts, designed data pipelines, and implemented cost-effective solutions. I have also worked on recruitment analytics, built data warehouses, and mentored engineers.

Vetted Google Cloud Dataflow developer in Brazil (UTC-3)

I worked as a Software Engineer and as a Data Engineer on a Data Platform team at a company that stored roughly 10% of all electronic invoices in Brazil. That meant billions of records in our databases, with tens of millions more arriving every day. My team was responsible for providing APIs for other engineering teams, both to ingest new records into our platform and to retrieve records based on various filters. These APIs enabled the engineering teams to develop new features for end users without worrying about business logic, which database the data was stored in, data migrations, data consistency, etc.

As a software engineer, I developed event-driven microservices for real-time data processing and HTTP APIs. My day-to-day tasks included:

* Running discoveries to find out what the problem is and how to solve it
* Designing the solution
* Creating the tasks in our backlog
* Building the systems with unit and integration tests
* Setting up the CI/CD pipeline
* Creating alerts and monitoring the system after it was deployed to production

As a data engineer, I built batch pipelines to migrate billions of records from one database to another in just a few hours, and I also maintained several Airflow DAGs that triggered ETL pipelines populating data marts in our data warehouse.

I was also the Data Engineering Chapter Leader, responsible for tutoring junior data engineers, creating data engineering training, spreading good practices and standards for building pipelines, and organizing meetings to discuss technologies and tools within the data engineering scope.

Most recently, I was being trained to become a Tech Lead. I took over my Tech Lead's responsibilities when he went on vacation for 20 days, which included talking to our manager to decide which projects to prioritize, helping the team plan weekly tasks, resolving major incidents, and planning the next quarter's roadmap.

Vetted Google Cloud Dataflow developer in Brazil (UTC-3)

Please visit my LinkedIn for more details: [https://www.linkedin.com/in/marcel-pallete-81166b2b/](https://www.linkedin.com/in/marcel-pallete-81166b2b/)

Experienced tech consultant, currently working as Data Tech Lead at Ernst & Young Brazil, focused on:

- SQL, Python, PySpark, and Scala
- Data Engineering
- Data Architecture
- ETL pipelines (in code or with ETL tools such as Pentaho, Alteryx, and SSIS)
- Big Data (Spark, HDFS, Hive, NiFi, Kafka)
- Tuning and performance
- Azure Cloud (Data Factory, Databricks, Synapse Analytics, SQL DB, Data Lake Gen 2, Stream Analytics)
- Terraform and IaC for Azure Cloud
- Databases (SQL Server, PostgreSQL, Teradata, IBM DB2, MySQL)
- Data Modeling (Erwin, DW, DLH, OLAP/OLTP)
- Business Intelligence and DataViz: Power BI at expert level (M, DAX, DAX Studio, Tabular Editor), Tableau, Looker/Data Studio, Looker and LookML, Qlik Sense and QlikView
- Microsoft Fabric (complete solution)
- Microsoft solutions (Power Platform, Power Automate, SharePoint, Power Apps, Dataverse)

Google Cloud Dataflow developer in Brazil (UTC-3)

✔ Knowledge of programming languages such as Python and SQL, and of cloud services such as GCP and AWS; ✔ Experience with ETL tools such as Apache Airflow, Spark, and Apache Beam, plus data modeling skills; ✔ Knowledge of operating systems such as Linux; ✔ Experience with business intelligence software.

Google Cloud Dataflow developer in Brazil (UTC-3)

With over a decade and a half of experience in the data field, I have developed a solid expertise across various sectors, always seeking new challenges and accumulating knowledge over the years. I am recognized for my ability to understand client needs and deliver creative and effective solutions to solve complex problems. With advanced skills in data modeling and a deep understanding of business rules, I am able to build data models and solutions that meet the specific needs of the company. My attentive and customer-oriented approach allows me to structure, analyze, and simplify data objectively, providing valuable insights to drive organizational success. I am seeking challenging opportunities in the data field where I can apply my extensive experience and technical skills to develop innovative solutions that drive efficiency and success in data analysis and decision-making processes within the company. I am committed to utilizing all my knowledge, learning ability, and technical leadership to significantly contribute to the growth and advancement of the organization, delivering quality solutions, and adding value to the business.

Google Cloud Dataflow developer in Brazil (UTC-3)

As an experienced Software Engineer, I specialize in cloud-based big data processing, microservices architecture, and the development of large-scale products. I am currently part of the dynamic team at Jusbrasil, a platform with an active user base of over 26 million. Our team has successfully navigated numerous challenges inherent to large-scale operations, ensuring the high availability of our services and the consistency of our data. This success is largely attributable to our robust engineering culture. My technical expertise spans a wide range of technologies, including but not limited to:

- Programming Languages: Python, JavaScript, Java, Scala
- Web Technologies: HTML, CSS, React, GraphQL, REST
- Data Processing: RabbitMQ, Kafka, Spark, Airflow
- Containerization & Orchestration: Docker, Kubernetes
- Databases: MySQL, PostgreSQL, MongoDB, Redis, Bigtable, BigQuery
- Version Control & CI/CD: Git, GitHub, GitHub Actions, Jenkins
- Monitoring Tools: Grafana, Prometheus, Loki
- Project Management: Agile, Scrum

Google Cloud Dataflow developer in Brazil (UTC-3)

I have 17 years of experience in software development, working on implementation, migration, and new-solution development projects at national and multinational companies. I have experience with several types of systems, including SAP ERP, microservice environments, and legacy systems, using languages such as PHP, ABAP, C++, and Node.js for their maintenance and implementation.

Vetted Google Cloud Dataflow developer in Brazil (UTC-3)

**Deep Learning Architect (L5) | AWS Generative AI Innovation Center** _São Paulo, Brazil_

With 5+ years of experience in AI/ML, I am currently a Deep Learning Architect at the AWS Generative AI Innovation Center, blending my expertise in Generative AI science with advanced architectural design to deliver robust, scalable solutions. In my role, which merges the responsibilities of a Data Scientist and a Solutions Architect, I lead customer engagements from proof of concept to production-grade systems, ensuring they are secure, fault-tolerant, and optimized for enterprise performance. As a tech lead, I maintain a hands-on approach (contributing to model development, optimization, and deployment) while collaborating with diverse teams to design solutions that drive innovation and disrupt industries. I am seeking long-term opportunities where I can leverage my dual expertise to develop cutting-edge Generative AI solutions and help companies revolutionize their business verticals through transformative technology.
### **Technical Skills**

**Artificial Intelligence & Machine Learning**

* Expertise in Large Language Models (LLMs), Natural Language Processing (NLP), Computer Vision, Neural Networks, Transformers
* Hands-on experience with frameworks: PyTorch, TensorFlow, Scikit-learn

**Cloud Computing & DevOps**

* Four AWS certifications (Solutions Architect, Cloud Developer, Machine Learning Specialty, Data Analytics Specialty); Docker, Kubernetes, CircleCI, Elastic Beanstalk, Apache Airflow

**Programming & Software Development**

* Proficient in Python, R, and SQL
* Experienced in building and consuming RESTful and GraphQL APIs

**Data Engineering & Big Data**

* Skilled in Apache Spark and NoSQL databases (MongoDB, DynamoDB, Elasticsearch)
* Experienced in building data pipelines and ETL processes and in optimizing data workflows

**Data Analytics & Visualization**

* Proficient in Power BI
* Proficient in Plotly, Bokeh, Matplotlib, and Seaborn
* Advanced statistical modeling using R and statsmodels

**Soft Skills**

* Strong problem-solving ability, Agile/Scrum methodology, proven team leadership, and commitment to continuous learning

**Languages**

* Portuguese (Native), English (Fluent), French (Fluent), Spanish (Advanced), German (Advanced)

Vetted Google Cloud Dataflow developer in Brazil (UTC-3)

Hands-on at any time! I really enjoy developing Generative AI solutions (API development experience with ChatGPT/OpenAI, Whisper DL, AssemblyAI, DeepL, Bard, Bing, SageMaker) and my own algorithms for computer vision and document classification. Proficient in Java, Python, Node.js, React, Angular, C#, .NET, Golang, and more. Experience in Machine Learning, Computer Vision, Engineering Management, Agile, Team Topology, Accelerate, Scaling Up, Scrum, Kanban, Business Strategy, Business Analysis, Software Design, Software Architecture, High Tech Design, Low Tech Design, Cloud Computing, AWS, GCP, Azure, Oracle Cloud, Software as a Service (SaaS), Bank as a Service (BaaS), PaaS, FaaS, health systems and the Manchester protocol, and extensive knowledge of Payments, Billing & Settlement, KYC/KYP, NFT, Blockchain, Event Driven, TDD, DDD, CQRS, GraphQL, REST, Kafka, Pub/Sub, Pandas, TensorFlow, OpenCV, Scikit, Kotlin, Spring (Boot/Cloud/Data/Batch), MongoDB, Cassandra, Redis, DynamoDB, Postgres, MS SQL Server, MySQL, Oracle, Sybase, Kubernetes, Docker, Rancher, CI/CD, Git, TOGAF, Alfresco, Nuxeo, Camunda, BPM, IBM Case Management, WebLogic, WebSphere, JBoss, Quarkus, GraalVM, Microservices, SOLID, Design Patterns, New Relic, ELK, Splunk, Datadog, Terraform, Robot Framework, OAuth, JKS, SSL, HMZ/DMZ, data encryption, caching, Oracle RDBMS, Jira, Confluence, Miro, Figma, Asana, UiPath Robots, UiPath Orchestrator, OCR, TWAIN, and more.

Discover more freelance Google Cloud Dataflow developers today

Why choose Arc to hire Google Cloud Dataflow developers

Access vetted talent

Meet Google Cloud Dataflow developers who are fully vetted for domain expertise and English fluency.

View matches in seconds

Stop reviewing 100s of resumes. View Google Cloud Dataflow developers instantly with HireAI.

Save with global hires

Get access to 450,000 professionals in 190 countries, saving up to 58% vs. traditional hiring.

Get real human support

Feel confident hiring Google Cloud Dataflow developers with hands-on help from our team of expert recruiters.


How to use Arc

1. Tell us your needs

Share with us your goals, budget, job details, and location preferences.

2. Meet top Google Cloud Dataflow developers

Connect directly with your best matches, fully vetted and highly responsive.

3. Hire Google Cloud Dataflow developers

Decide who to hire, and we'll take care of the rest. Enjoy peace of mind with secure freelancer payments and compliant global hires via trusted EOR partners.

Hire Top Remote

Google Cloud Dataflow developers

in Brazil

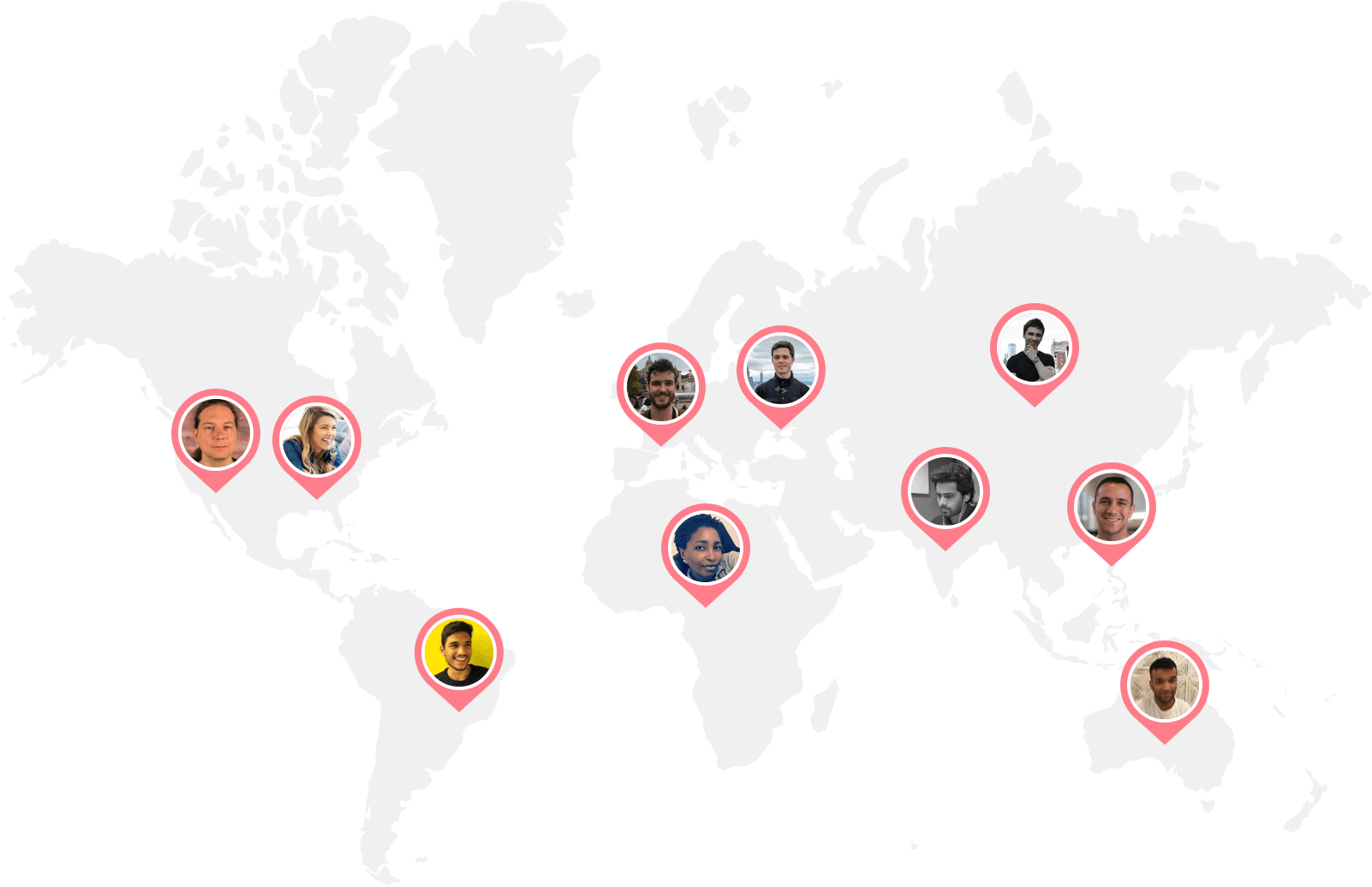

Arc talent

around the world

Arc Google Cloud Dataflow developers

in Brazil

Ready to hire your ideal Google Cloud Dataflow developers?

Hire top software developers in Brazil with Arc

Arc offers pre-vetted remote software developers skilled in every programming language, framework, and technology.


Build your team of Google Cloud Dataflow developers anywhere

Arc helps you build your team with our network of full-time and freelance Google Cloud Dataflow developers worldwide.

We assist you in assembling your ideal team of programmers in your preferred location and timezone.

- 🇺🇸 United States

- 🇨🇦 Canada

- 🇬🇧 United Kingdom

- 🇩🇪 Germany

- 🇫🇷 France

- 🇳🇱 Netherlands

- 🇦🇺 Australia

- 🇸🇬 Singapore

- 🇷🇴 Romania

- 🇫🇮 Finland

- 🇮🇪 Ireland

- 🇵🇱 Poland

- 🇺🇦 Ukraine

- 🇪🇸 Spain

- 🇮🇹 Italy

- 🇹🇷 Turkey

- 🇸🇪 Sweden

- 🇧🇪 Belgium

- 🇹🇼 Taiwan

- 🇯🇵 Japan

- 🇲🇽 Mexico

- 🇦🇪 United Arab Emirates

- 🇦🇷 Argentina

- 🇧🇷 Brazil

- 🇨🇱 Chile

- 🇨🇳 China

- 🇨🇴 Colombia

- 🇪🇨 Ecuador

- 🇮🇱 Israel

- 🇲🇾 Malaysia

- 🇵🇰 Pakistan

- 🇹🇭 Thailand

- 🇺🇾 Uruguay

- 🇻🇳 Vietnam

- 🇰🇪 Kenya

- 🇿🇦 South Africa

- 🇳🇬 Nigeria

- 🇲🇦 Morocco

- 🇪🇬 Egypt

- 🇮🇩 Indonesia

- 🇵🇭 Philippines

- 🇮🇳 India

FAQs

Why hire a Google Cloud Dataflow developer?

Most companies today run software that requires developers to build and maintain it. For instance, if your business has a website or an app, you’ll need to keep it updated to ensure you continue to provide positive user experiences. At times, you may even need to revamp your website or app. This is where hiring a developer becomes crucial.

Depending on the stage and scale of your product and services, you may need to hire a Google Cloud Dataflow developer, multiple engineers, or even a full remote developer team to help keep your business running. If you’re a startup or a company running a website, your product will likely grow out of its original skeletal structure. Hiring full-time remote Google Cloud Dataflow developers can help keep your website up-to-date.

How do I hire Google Cloud Dataflow developers?

To hire a Google Cloud Dataflow developer, you need to go through a hiring process of defining your needs, posting a job description, screening resumes, conducting interviews, testing candidates’ skills, checking references, and making an offer.

Arc offers three services to help you hire Google Cloud Dataflow developers effectively and efficiently. Hire full-time Google Cloud Dataflow developers from a pool of vetted candidates, with new options every two weeks, and pay through prepaid packages or per hire. Alternatively, hire the top 2.3% of expert freelance Google Cloud Dataflow developers in 72 hours, with weekly payments.

If you’re not ready to commit to the paid plans, our free job posting service is for you. By posting your job on Arc, you can reach up to 450,000 developers around the world. With that said, the free plan will not give you access to pre-vetted Google Cloud Dataflow developers.

Furthermore, we’ve partnered with compliance and payroll platforms Deel and Remote to make paperwork and hiring across borders easier. This way, you can focus on finding the right Google Cloud Dataflow developers for your company, and let Arc handle the logistics.

Where do I hire the best remote Google Cloud Dataflow developers?

There are two types of platforms you can hire Google Cloud Dataflow developers from: general and niche marketplaces. General platforms like Upwork, Fiverr, and Gigster offer a wide variety of non-vetted talent, not limited to developers. While you can find Google Cloud Dataflow developers on general platforms, top tech talent generally avoids general marketplaces in order to escape bidding wars.

If you’re looking to hire the best remote Google Cloud Dataflow developers, consider niche platforms like Arc that naturally attract and carefully vet their Google Cloud Dataflow developers for hire. This way, you’ll save time and related hiring costs by only interviewing the most suitable remote Google Cloud Dataflow developers.

Some factors to consider when you hire Google Cloud Dataflow developers include the platform’s specialty, developer’s geographical location, and the service’s customer support. Depending on your hiring budget, you may also want to compare the pricing and fee structure.

Make sure to list out all of the important factors when you compare and decide on which remote developer job board and platform to use to find Google Cloud Dataflow developers for hire.

How do I write a Google Cloud Dataflow developer job description?

Writing a good Google Cloud Dataflow developer job description is crucial in helping you hire Google Cloud Dataflow developers that your company needs. A job description’s key elements include a clear job title, a brief company overview, a summary of the role, the required duties and responsibilities, and necessary and preferred experience. To attract top talent, it's also helpful to list other perks and benefits, such as flexible hours and health coverage.

Crafting a compelling job title is critical as it's the first thing that job seekers see. It should offer enough information to grab their attention and include details on the seniority level, type, and area or sub-field of the position.

Your company description should succinctly outline what makes your company unique to compete with other potential employers. The role summary for your remote Google Cloud Dataflow developer should be concise and read like an elevator pitch for the position, while the duties and responsibilities should be outlined using bullet points that cover daily activities, tech stacks, tools, and processes used.

For a comprehensive guide on how to write an attractive job description to help you hire Google Cloud Dataflow developers, read our Software Engineer Job Description Guide & Templates.

What skills should I look for in a Google Cloud Dataflow developer?

The top five technical skills Google Cloud Dataflow developers should possess include proficiency in programming languages, understanding data structures and algorithms, experience with databases, familiarity with version control systems, and knowledge of software testing and debugging.

Meanwhile, the top five soft skills are communication, problem-solving, time management, attention to detail, and adaptability. Effective communication is essential for coordinating with clients and team members, while problem-solving skills enable Google Cloud Dataflow developers to analyze issues and come up with effective solutions. Time management skills are important to ensure projects are completed on schedule, while attention to detail helps to catch and correct issues before they become bigger problems. Finally, adaptability is crucial for Google Cloud Dataflow developers to keep up with evolving technology and requirements.

What kinds of Google Cloud Dataflow developers are available for hire through Arc?

You can find a variety of Google Cloud Dataflow developers for hire on Arc! At Arc, you can hire on a freelance, full-time, part-time, or contract-to-hire basis. For freelance Google Cloud Dataflow developers, Arc matches you with the right senior developer in roughly 72 hours. As for full-time remote Google Cloud Dataflow developers for hire, you can expect to make a successful hire in 14 days. To extend a freelance engagement to a full-time hire, a contract-to-hire fee will apply.

In addition to a variety of engagement types, Arc also offers a wide range of developers located in different regions, such as Latin America and Eastern Europe. Depending on your needs, Arc offers a global network of skilled software engineers across many time zones and countries for you to choose from.

Lastly, our remote-ready Google Cloud Dataflow developers for hire are all mid-level and senior-level professionals. They are ready to start coding straight away, anytime, anywhere.

Why is Arc the best choice for hiring Google Cloud Dataflow developers?

Arc is trusted by hundreds of startups and tech companies around the world, and we’ve matched thousands of skilled Google Cloud Dataflow developers with both freelance and full-time jobs. We’ve successfully helped Silicon Valley startups and larger tech companies like Spotify and Automattic hire Google Cloud Dataflow developers.

Every Google Cloud Dataflow developer for hire in our network goes through a vetting process to verify their communication abilities, remote work readiness, and technical skills. Additionally, HireAI, our GPT-4-powered AI recruiter, enables you to get instant candidate matches without searching and screening.

Not only can you expect to find the most qualified Google Cloud Dataflow developer on Arc, but you can also count on your account manager and the support team to make each hire a success. Enjoy a streamlined hiring experience with Arc, where we provide you with the developer you need, and take care of the logistics so you don’t need to.

How does Arc vet a Google Cloud Dataflow developer's skills?

Arc has a rigorous and transparent vetting process for all types of developers. To become a vetted Google Cloud Dataflow developer for hire on Arc, developers must pass a profile screening, complete a behavioral interview, and pass a technical interview or pair programming.

While Arc has a strict vetting process for its verified Google Cloud Dataflow developers, if you’re using Arc’s free job posting plan, you will only have access to non-vetted developers. If you’re using Arc’s paid plans to hire Google Cloud Dataflow developers, you can rest assured that all remote Google Cloud Dataflow developers have been thoroughly vetted for the high-caliber communication and technical skills you need in a successful hire.

How long does it take to find Google Cloud Dataflow developers on Arc?

Arc pre-screens all of our remote Google Cloud Dataflow developers before we present them to you. As such, all the remote Google Cloud Dataflow developers you see on your Arc dashboard are interview-ready candidates who make up the top 2% of applicants who pass our technical and communication assessment. You can expect the interview process to happen within days of posting your jobs to 450,000 candidates. You can also expect to hire a freelance Google Cloud Dataflow developer in 72 hours, or find a full-time Google Cloud Dataflow developer that fits your company’s needs in 14 days.

Here’s a quote from Philip, the Director of Engineering at Chegg:

“The biggest advantage and benefit of working with Arc is the tremendous reduction in time spent sourcing quality candidates. We’re able to identify the talent in a matter of days.”

Find out more about how Arc successfully helped our partners in hiring remote Google Cloud Dataflow developers.

How much does a freelance Google Cloud Dataflow developer charge per hour?

Depending on the freelance developer job board you use, freelance remote Google Cloud Dataflow developers' hourly rates can vary drastically. For instance, if you're looking on general marketplaces like Upwork and Fiverr, you can find Google Cloud Dataflow developers for hire for as little as $10 per hour. However, high-quality freelance developers often avoid general freelance platforms like Fiverr to avoid the bidding wars.

When you hire Google Cloud Dataflow developers through Arc, they typically charge between $60-100+/hour (USD). To get a better understanding of contract costs, check out our freelance developer rate explorer.

How much does it cost to hire a full time Google Cloud Dataflow developer?

According to the U.S. Bureau of Labor Statistics, the median annual wage for software developers in the U.S. was $120,730 in May 2021. This amounts to around $70-100 per hour. Note that this does not include the direct cost of hiring, which totals about $4,000 per new recruit, according to Glassdoor.

Your remote Google Cloud Dataflow developer’s annual salary may differ dramatically depending on their years of experience, related technical skills, education, and country of residence. For instance, if the developer is located in Eastern Europe or Latin America, the hourly rate for developers will be around $75-95 per hour.

For more frequently asked questions on hiring Google Cloud Dataflow developers, check out our FAQs page.