Hire the Top 2% of

Remote LLM Inference Tuning Developers

in India

Your trusted source for hiring top LLM inference tuning developers, engineers, experts, programmers, freelancers, coders, contractors, and consultants in India. Perfect for startups and enterprises.

Freelance contractors, full-time roles, global teams

148 remote LLM inference tuning developers and experts available to hire:

Remote LLM inference tuning developer in India (UTC+6)

I am Ajith from Bangalore, India. I completed my Master of Technology in Robotics and Automation at CET Trivandrum. I currently serve as a Research Engineer at a startup specializing in AI, where I have gained around 7 years of invaluable experience. I have prior research and development experience in both academia and industry. Combining my academic background and research experience, I am confident in my ability to contribute to research and help the industry move toward better solutions. I am eager to discuss my qualifications in more detail and to learn more about the opportunity. Please feel free to contact me to set up a time to chat. I look forward to hearing from you. Thank you very much for your patience and consideration.

Vetted LLM inference tuning developer in India (UTC+6)

Hi, I’m Lovish — a seasoned software developer and tech leader with 9 years of experience collaborating with startups and founders. I specialize in mobile development, AI, data analytics, product strategy, and team building. If you’re looking for a versatile expert who can code, lead, and deliver, let’s connect.

Remote LLM inference tuning developer in India (UTC-7)

**AI and NLP Researcher with a PhD degree, Seeking Exciting Opportunities. Specializing in Social Computing & Human-Centered AI, Open to Global Opportunities for Advancement. Jobseeker, currently living at the Indian Institute of Technology Patna, India.**

Remote LLM inference tuning developer in India (UTC+6)

AI Engineer with over 4 years of experience designing and deploying scalable computer vision and machine learning solutions. Skilled in Python, PyTorch, Docker, and MLOps workflows. Strong focus on real-time edge AI deployments and back-end engineering using Django. Interested in generative AI systems and efficient orchestration.

Vetted LLM inference tuning developer in India (UTC+6)

I am a Senior Data Scientist with over 9 years of experience in leveraging advanced AI, machine learning, and deep learning techniques to solve complex business challenges across industries like banking, retail, manufacturing, and technology. My expertise lies in designing scalable solutions that drive measurable outcomes, optimize processes, and empower organizations to make data-driven decisions.

Currently, at JPMorgan Chase & Co., I lead initiatives that have delivered significant business value, including developing an unsupervised payment risk detection model that mitigated fraud risks and saved $24M globally. I also spearheaded the creation of a Retrieval-Augmented Generation (RAG) framework to enhance enterprise-wide information retrieval and knowledge generation. My work on standardizing LLM evaluation frameworks has improved cross-functional collaboration and ensured consistency in model performance metrics.

Previously, at Walmart Global Tech India, I contributed to sustainability goals by building multi-model machine learning systems for EV charging station recommendations while driving store revenue growth. I also implemented transformer-based models for product categorization and price standardization. At Tiger Analytics, I developed real-time predictive maintenance systems that saved $50M annually for a global steel manufacturer and improved inventory forecasting accuracy by over 20%.

With a strong foundation in programming languages like Python and SQL, libraries such as PyTorch and LangChain, and expertise in cloud platforms like AWS SageMaker and GCP BigQuery, I specialize in cutting-edge technologies like Large Language Models (LLMs), Natural Language Processing (NLP), anomaly detection, and MLOps. My academic projects further showcase my ability to innovate in areas like federated learning for DocVQA, RAG frameworks for better retrieval-generation pipelines, and prompt compression for optimized token utilization.

Beyond my professional achievements, I am dedicated to mentoring aspiring AI professionals through Scaler Academy and sharing insights as a tech blogger at KnowledgeHut. My mission is to harness the power of AI to create meaningful impact while fostering growth in the AI community.

Vetted LLM inference tuning developer in India (UTC-7)

Senior Software Engineer specializing in large-scale distributed application development and artificial intelligence, backed by 6 years of experience. Recognized for architecting scalable solutions, leading high-impact projects, and mentoring talent.

Vetted LLM inference tuning developer in India (UTC+6)

I am a skilled Software Engineer with experience in implementing data privacy practices, developing multi-tenancy architecture, and designing high-performance portals. Proficient in Java, Cloud, Spring Boot, Microservices, and Machine Learning.

Vetted LLM inference tuning developer in India (UTC+6)

With over 5 years in the IT industry, I specialize in Storage Software Development and am currently a Software Developer at Scale AI. My role involves training AI models using Python, where I apply my deep understanding of RAG applications and Large Language Models (LLMs). I excel at evaluating and selecting the right LLMs for different use cases and deploying data science models into production. I bring strong analytical and problem-solving skills to the table and have a proven track record of working effectively with business teams. I am proficient in Python and C and have a solid foundation in Volume Management, Clustering, and various storage and networking protocols.

Vetted LLM inference tuning developer in India (UTC+6)

Data Scientist with 6.5 years of experience in ML, NLP, and deep learning. Proficient in Python, R, and SQL, and skilled in intricate data analysis, visualization, and statistical modeling. Proficient in generative AI tools like LangChain, integrating it with ChatGPT, Pinecone, Llama 2, and Hugging Face for dynamic language-based applications. Strong problem-solving abilities, excelling in fast-paced environments.

Vetted LLM inference tuning developer in India (UTC+6)

**MACHINE LEARNING ENGINEER with 7+ years of experience.** At MediaRadar, Inc., our team has propelled machine learning innovation, developing a Siamese-based model that significantly increased top-10 accuracy and acceptance rates. With a Bachelor's degree in Mechanical Engineering from the Indian Institute of Technology, Jodhpur, I've honed my technical acumen to specialize in Large Language Models (LLMs) and machine learning algorithms, integrating them to enrich ads data and augment datasets for improved model generalization. My journey is marked by a relentless pursuit of excellence and collaboration. Building on my foundational programming skills certified by LinkedIn and Coursera, my current focus lies in optimizing ML pipelines and leveraging GPT to automate processes. Such initiatives not only enhance performance but also bring the transformative power of AI to the forefront of ad tech industry innovation.

Discover more freelance LLM inference tuning developers today

Why use Arc to find an LLM inference tuning developer for hire

Access vetted LLM inference tuning developers

Meet dedicated LLM inference tuning developers who are fully vetted for domain expertise and English fluency.

View matches in seconds

Stop reviewing hundreds of resumes. View LLM inference tuning developers instantly with HireAI.

Save with global hires

Get access to 450,000 developers in 190 countries, saving up to 58% vs traditional hiring.

Get real human support

Feel confident hiring LLM inference tuning developers with hands-on help from our team of expert recruiters.

Why clients hire LLM inference tuning developers with Arc

How to use Arc

1. Tell us your needs

Share with us your goals, budget, job details, and location preferences.

2. Meet top LLM inference tuning developers

Connect directly with your best matches, fully vetted and highly responsive.

3. Hire LLM inference tuning developers

Decide who to hire, and we'll take care of the rest. Enjoy peace of mind with secure freelancer payments and compliant global hires via trusted EOR partners.

Hire Top Remote

Llm inference tuning developers

in India

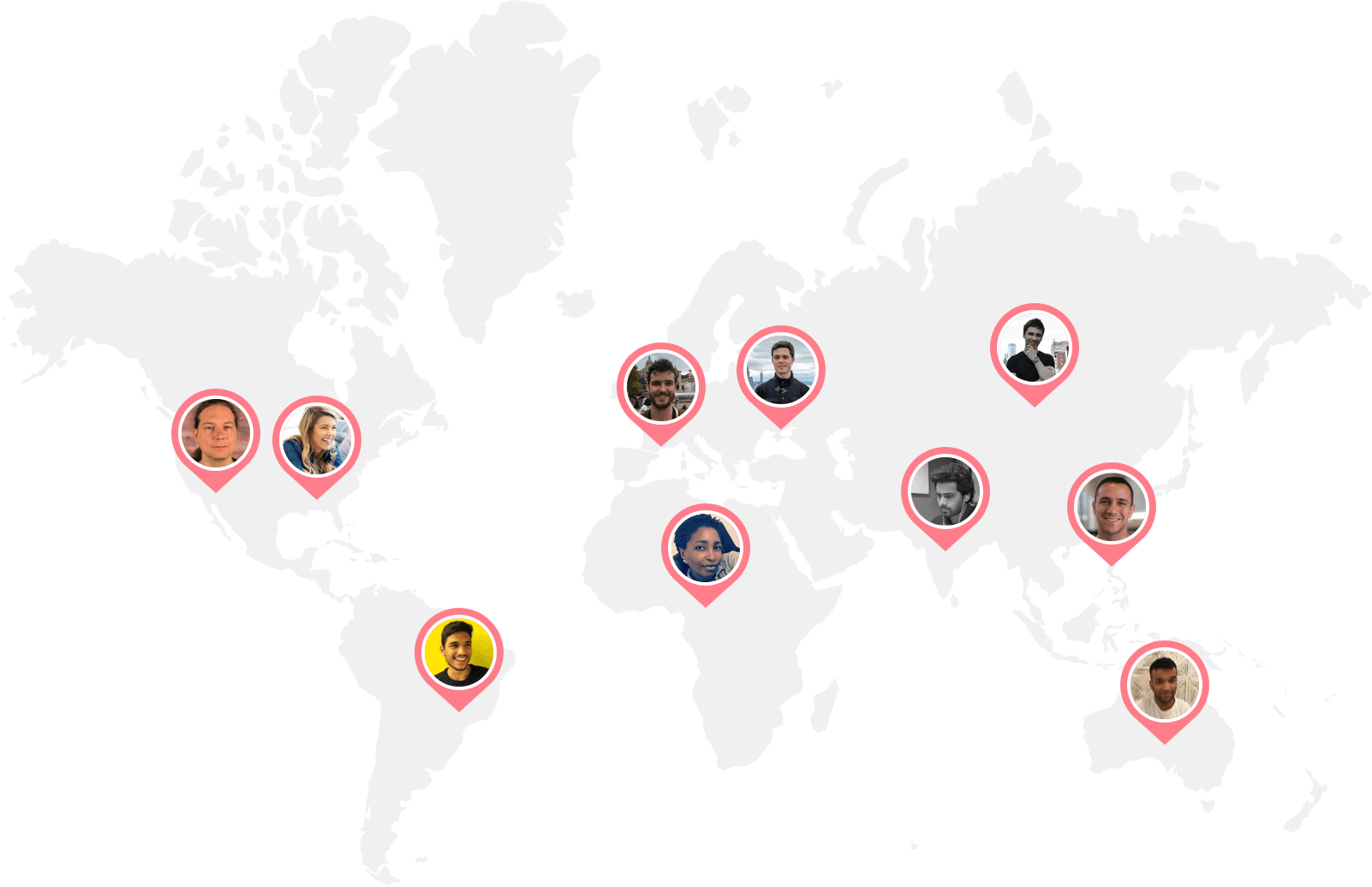

Arc talent

around the world

Arc Llm inference tuning developers

in India

Ready to hire your ideal LLM inference tuning developers?

Get started

Top remote developers are just a few clicks away

Arc offers pre-vetted remote developers skilled in every programming language, framework, and technology. Look through our popular remote developer specializations below.

Find and hire developers by role / expertise

Find and hire engineers by skills

Build your team of LLM inference tuning developers anywhere

Arc helps you build your team with our network of full-time and freelance LLM inference tuning developers worldwide.

We assist you in assembling your ideal team of programmers in your preferred location and timezone.

FAQs

Why hire an LLM inference tuning developer?

In today’s world, most companies have software that developers need to build and maintain. For instance, if your business has a website or an app, you’ll need to keep it updated to ensure you continue to provide positive user experiences. At times, you may even need to revamp your website or app. This is where hiring a developer becomes crucial.

Depending on the stage and scale of your product and services, you may need to hire an LLM inference tuning developer, multiple engineers, or even a full remote developer team to help keep your business running. If you’re a startup or a company running a website, your product will likely outgrow its original skeletal structure. Hiring full-time remote LLM inference tuning developers can help keep your website up-to-date.

How do I hire LLM inference tuning developers?

To hire an LLM inference tuning developer, you need to go through a hiring process of defining your needs, posting a job description, screening resumes, conducting interviews, testing candidates’ skills, checking references, and making an offer.

Arc offers three services to help you hire LLM inference tuning developers effectively and efficiently. Hire full-time LLM inference tuning developers from a vetted candidate pool, with new options every two weeks, and pay through prepaid packages or per hire. Alternatively, hire the top 2.3% of expert freelance LLM inference tuning developers in 72 hours, with weekly payments.

If you’re not ready to commit to the paid plans, our free job posting service is for you. By posting your job on Arc, you can reach up to 450,000 developers around the world. That said, the free plan will not give you access to pre-vetted LLM inference tuning developers.

Furthermore, we’ve partnered with compliance and payroll platforms Deel and Remote to make paperwork and hiring across borders easier. This way, you can focus on finding the right LLM inference tuning developers for your company, and let Arc handle the logistics.

Where do I hire the best remote LLM inference tuning developers?

There are two types of platforms you can hire LLM inference tuning developers from: general and niche marketplaces. General platforms like Upwork, Fiverr, and Gigster offer a wide range of non-vetted talent, not limited to developers. While you can find LLM inference tuning developers on general platforms, top tech talent generally avoids general marketplaces in order to escape bidding wars.

If you’re looking to hire the best remote LLM inference tuning developers, consider niche platforms like Arc that naturally attract and carefully vet their LLM inference tuning developers for hire. This way, you’ll save time and related hiring costs by only interviewing the most suitable remote LLM inference tuning developers.

Some factors to consider when you hire LLM inference tuning developers include the platform’s specialty, the developer’s geographical location, and the service’s customer support. Depending on your hiring budget, you may also want to compare the pricing and fee structure.

Make sure to list all of the factors that matter to you when you compare remote developer job boards and platforms and decide which one to use to find LLM inference tuning developers for hire.

How do I write an LLM inference tuning developer job description?

Writing a good LLM inference tuning developer job description is crucial in helping you hire the LLM inference tuning developers your company needs. A job description’s key elements include a clear job title, a brief company overview, a summary of the role, the required duties and responsibilities, and necessary and preferred experience. To attract top talent, it's also helpful to list other perks and benefits, such as flexible hours and health coverage.

Crafting a compelling job title is critical as it's the first thing that job seekers see. It should offer enough information to grab their attention and include details on the seniority level, type, and area or sub-field of the position.

Your company description should succinctly outline what makes your company unique so you can compete with other potential employers. The role summary for your remote LLM inference tuning developer should be concise and read like an elevator pitch for the position, while the duties and responsibilities should be outlined using bullet points that cover daily activities, tech stacks, tools, and processes used.

For a comprehensive guide on how to write an attractive job description to help you hire LLM inference tuning developers, read our Engineer Job Description Guide & Templates.

What skills should I look for in an LLM inference tuning developer?

The top five technical skills LLM inference tuning developers should possess include proficiency in programming languages, understanding data structures and algorithms, experience with databases, familiarity with version control systems, and knowledge of testing and debugging.

Meanwhile, the top five soft skills are communication, problem-solving, time management, attention to detail, and adaptability. Effective communication is essential for coordinating with clients and team members, while problem-solving skills enable LLM inference tuning developers to analyze issues and come up with effective solutions. Time management skills are important to ensure projects are completed on schedule, while attention to detail helps to catch and correct issues before they become bigger problems. Finally, adaptability is crucial for LLM inference tuning developers to keep up with evolving technology and requirements.

What kinds of LLM inference tuning developers are available for hire through Arc?

You can find a variety of LLM inference tuning developers for hire on Arc! At Arc, you can hire on a freelance, full-time, part-time, or contract-to-hire basis. For freelance LLM inference tuning developers, Arc matches you with the right senior developer in roughly 72 hours. As for full-time remote LLM inference tuning developers for hire, you can expect to make a successful hire in 14 days. To extend a freelance engagement to a full-time hire, a contract-to-hire fee will apply.

In addition to a variety of engagement types, Arc also offers a wide range of developers located in different geographical locations, such as Latin America and Eastern Europe. Depending on your needs, Arc offers a global network of skilled engineers in various time zones and countries for you to choose from.

Lastly, our remote-ready LLM inference tuning developers for hire are all mid-level and senior-level professionals. They are ready to start coding straight away, anytime, anywhere.

Why is Arc the best choice for hiring LLM inference tuning developers?

Arc is trusted by hundreds of startups and tech companies around the world, and we’ve matched thousands of skilled LLM inference tuning developers with both freelance and full-time jobs. We’ve successfully helped Silicon Valley startups and larger tech companies like Spotify and Automattic hire LLM inference tuning developers.

Every LLM inference tuning developer for hire in our network goes through a vetting process to verify their communication abilities, remote work readiness, and technical skills. Additionally, HireAI, our GPT-4-powered AI recruiter, enables you to get instant candidate matches without searching and screening.

Not only can you expect to find the most qualified LLM inference tuning developer on Arc, but you can also count on your account manager and the support team to make each hire a success. Enjoy a streamlined hiring experience with Arc, where we provide you with the developer you need, and take care of the logistics so you don’t need to.

How does Arc vet an LLM inference tuning developer's skills?

Arc has a rigorous and transparent vetting process for all types of developers. To become a vetted LLM inference tuning developer for hire on Arc, developers must pass a profile screening, complete a behavioral interview, and pass a technical interview or pair programming.

While Arc has a strict vetting process for its verified LLM inference tuning developers, if you’re using Arc’s free job posting plan, you will only have access to non-vetted developers. If you’re using one of Arc’s paid plans to hire LLM inference tuning developers, you can rest assured that all remote LLM inference tuning developers have been thoroughly vetted for the high-caliber communication and technical skills you need in a successful hire.

How long does it take to find LLM inference tuning developers on Arc?

Arc pre-screens all of our remote LLM inference tuning developers before we present them to you. As such, all the remote LLM inference tuning developers you see on your Arc dashboard are interview-ready candidates who make up the top 2% of applicants who pass our technical and communication assessment. You can expect the interview process to happen within days of posting your jobs to 450,000 candidates. You can also expect to hire a freelance LLM inference tuning developer in 72 hours, or find a full-time LLM inference tuning developer that fits your company’s needs in 14 days.

Here’s a quote from Philip, the Director of Engineering at Chegg:

“The biggest advantage and benefit of working with Arc is the tremendous reduction in time spent sourcing quality candidates. We’re able to identify the talent in a matter of days.”

Find out more about how Arc successfully helped our partners in hiring remote LLM inference tuning developers.

How much does a freelance LLM inference tuning developer charge per hour?

Depending on the freelance developer job board you use, freelance remote LLM inference tuning developers' hourly rates can vary drastically. For instance, if you're looking on general marketplaces like Upwork and Fiverr, you can find LLM inference tuning developers for hire for as little as $10 per hour. However, high-quality freelance developers often steer clear of general freelance platforms like Fiverr to avoid bidding wars.

When you hire LLM inference tuning developers through Arc, they typically charge between $60-100+/hour (USD). To get a better understanding of contract costs, check out our freelance developer rate explorer.

How much does it cost to hire a full-time LLM inference tuning developer?

According to the U.S. Bureau of Labor Statistics, the median annual wage for developers in the U.S. was $120,730 in May 2021. This amounts to around $70-100 per hour. Note that this does not include the direct cost of hiring, which totals about $4,000 per new recruit, according to Glassdoor.

Your remote LLM inference tuning developer’s annual salary may differ dramatically depending on their years of experience, related technical skills, education, and country of residence. For instance, if the developer is located in Eastern Europe or Latin America, the hourly rate will be around $75-95.

For more frequently asked questions on hiring LLM inference tuning developers, check out our FAQs page.